Final ID: WE488

A Comparison of English and Spanish AI Chatbot Responses to Online Cardiovascular Questions: Differences in Response Length, Readability, and Guidance

Abstract Body: Introduction

Large language models (LLMs) such as ChatGPT (OpenAI) and Gemini (Google) have become a dominant source of information. These tools can provide nuanced and personalized information, but they are also prone to hallucinations, societal biases, and sycophancy. While studies suggest that LLMs can provide high-quality health information, less is known about how this information varies by language and reading level. Cardiovascular disease remains a leading cause of morbidity and mortality, and access to understandable health information is critical to promoting health equity. We therefore studied how well these models provide approachable health information.

Methods

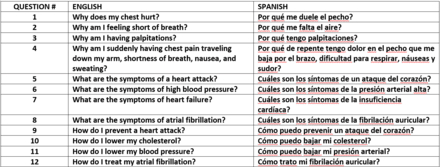

Twelve common cardiovascular questions were queried in English and Spanish on two platforms popular with patients seeking LLM-generated information: ChatGPT (GPT-4o) and Google's AI summaries (Table 1). Response length, sentence length, disclaimers, and clinical guidance elements were recorded and summarized with descriptive statistics, and paired t-tests were used to assess between-language differences. Readability was assessed with validated tools: the Flesch-Kincaid Grade Level for English and the Fernández-Huerta index for Spanish.
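The readability formulas and paired comparison named above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' analysis code. All function names are hypothetical. Syllables are estimated with an assumed vowel-group heuristic, which miscounts some English words and undercounts Spanish hiatus such as "día". Sentences are assumed to end at `.`, `!`, or `?`. The Fernández-Huerta coefficients follow the commonly implemented form 206.84 − 60 × (syllables per word) − 1.02 × (words per sentence), and published versions of this formula differ. Note that Fernández-Huerta is a reading-ease score (0 to 100, higher is easier), not a grade level, so the two scores are not on the same scale.

```python
import math
import re
import statistics
from collections.abc import Callable

# Runs of Unicode letters; matches accented Spanish words such as "corazón".
WORD_RE = re.compile(r"[^\W\d_]+")


def split_sentences(text: str) -> list[str]:
    """Split on terminal punctuation (. ! ?); a rough heuristic, not a tokenizer."""
    return [s for s in re.split(r"[.!?]+", text) if s.strip()]


def english_syllables(word: str) -> int:
    """Approximate English syllables as vowel groups, dropping a likely silent final 'e'."""
    w = word.lower()
    count = len(re.findall(r"[aeiouy]+", w))
    if w.endswith("e") and not w.endswith(("le", "ee")) and count > 1:
        count -= 1
    return max(count, 1)


def spanish_syllables(word: str) -> int:
    """Approximate Spanish syllables as vowel groups (undercounts hiatus such as 'día')."""
    return max(len(re.findall(r"[aeiouáéíóúü]+", word.lower())), 1)


def _counts(text: str, syllable_fn: Callable[[str], int]) -> tuple[int, int, int]:
    """Return (words, sentences, syllables) for one response."""
    words = WORD_RE.findall(text)
    if not words:
        raise ValueError("text contains no words")
    n_sentences = max(len(split_sentences(text)), 1)
    n_syllables = sum(syllable_fn(w) for w in words)
    return len(words), n_sentences, n_syllables


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level (U.S. school grade) for English text."""
    n_words, n_sent, n_syll = _counts(text, english_syllables)
    return 0.39 * (n_words / n_sent) + 11.8 * (n_syll / n_words) - 15.59


def fernandez_huerta(text: str) -> float:
    """Fernández-Huerta reading ease for Spanish text (assumed coefficients)."""
    n_words, n_sent, n_syll = _counts(text, spanish_syllables)
    return 206.84 - 60 * (n_syll / n_words) - 1.02 * (n_words / n_sent)


def paired_t(a: list[float], b: list[float]) -> tuple[float, int]:
    """Paired t statistic and degrees of freedom for matched samples (e.g., per question)."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    # Standard error of the mean difference; identical differences give zero and fail.
    se = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return statistics.mean(diffs) / se, len(diffs) - 1
```

Each response would be passed in as a string, and per-question English and Spanish values would be paired in the same order. For a p-value, SciPy's `scipy.stats.ttest_rel(a, b)` runs the complete paired test. Packages such as `textstat` offer more careful implementations of these readability formulas.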

Results

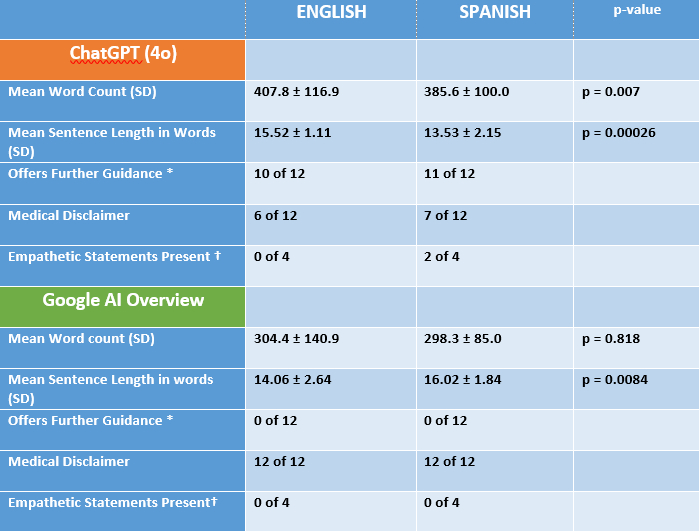

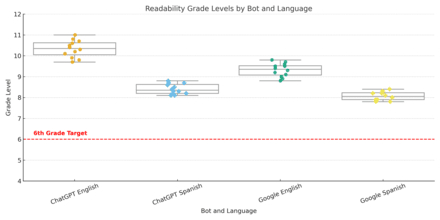

Across 48 responses (12 questions × 2 languages × 2 platforms), ChatGPT produced longer answers than Google in both languages. Mean sentence length was significantly longer in ChatGPT's English responses than in its Spanish responses. Conversely, Google's responses were slightly longer in Spanish. ChatGPT offered further medical guidance in 10 of 12 English and 11 of 12 Spanish responses, whereas Google provided none. Medical disclaimers were present in all Google responses and in approximately half of ChatGPT responses. Readability exceeded the recommended sixth-grade level across languages and platforms.

Conclusion

In conclusion, the length, readability, and guidance of AI responses to cardiovascular questions varied by language and platform. AI companies should tailor health-related outputs to reading levels appropriate for their users. Our finding that only about half of ChatGPT responses included medical disclaimers is consistent with other work in this field. Our study has major limitations, most notably its small sample size and the fact that our evaluation did not assess response quality or meaning. Further research should evaluate response quality across languages using validated metrics. Greater attention to readability and linguistic tailoring of AI outputs may enhance equitable access to understandable online health information.